Daichi Yashima

Hi, I’m Daichi Yashima, a Ph.D. student at Keio University advised by Prof. Komei Sugiura. I work on robotics, large-scale foundation models, multimodal language understanding, and embodied AI systems that can execute complex tasks in the physical world.

📝 Research Interests

• Foundation Models: Vision-Language Models, Vision-Language-Action Models

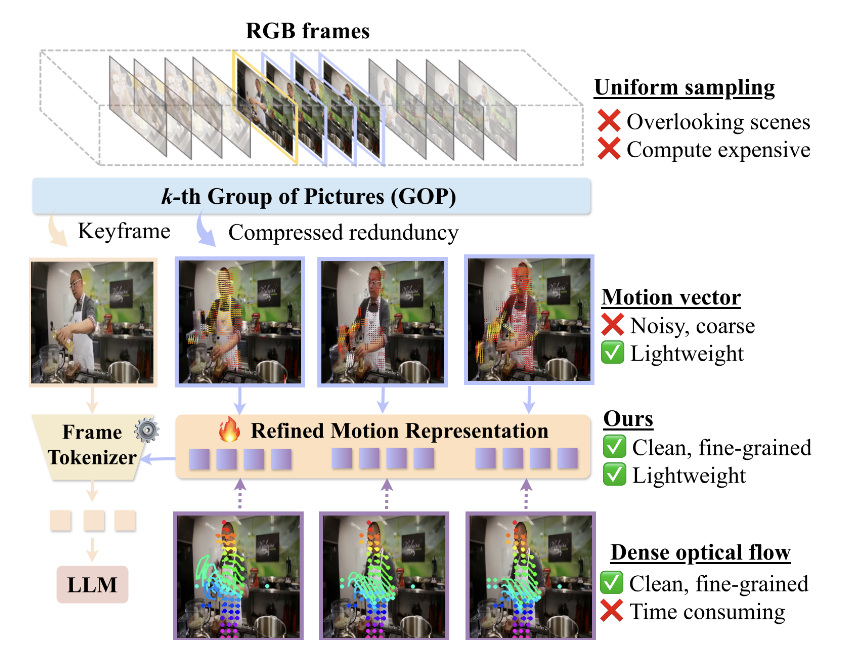

• Multimodal Language Understanding: Video Understanding, Self-supervised Learning

• Embodied AI: Service Robots, Mobile Manipulation

📰 News

• [2026/03] Our paper has been accepted to ICPR 2026

• [2026/02] Our papers have been accepted to CVPR 2026 and CVPR 2026 Findings

• [2025/03] Our paper has been accepted to IEEE RA-L

• [2025/02] Our paper has been accepted to IEEE RA-L

📄 Selected Publications

CVPR 2026 • 2026 (Acceptance Rate: 25.42%, h5-index: 450)

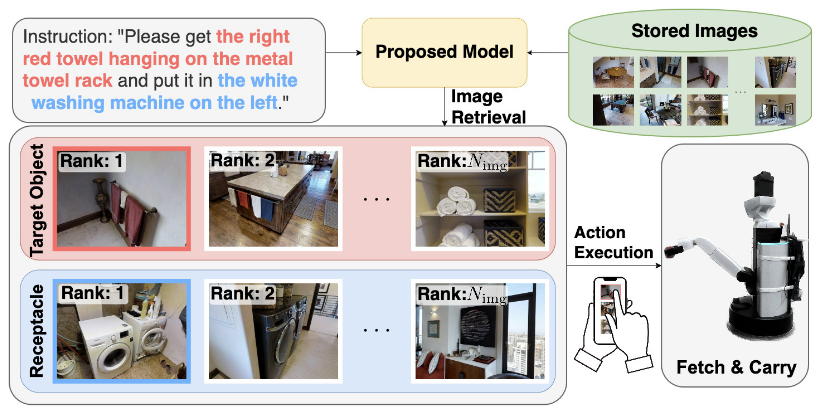

Open-Vocabulary Mobile Manipulation Based on Double Relaxed Contrastive Learning With Dense Labeling

IEEE RA-L • 2025 (IF: 5.2, h5-index: 132)